OpenAI Launches GPT-5-Codex-Mini: 4x Cheaper, Almost No Drop in Performance

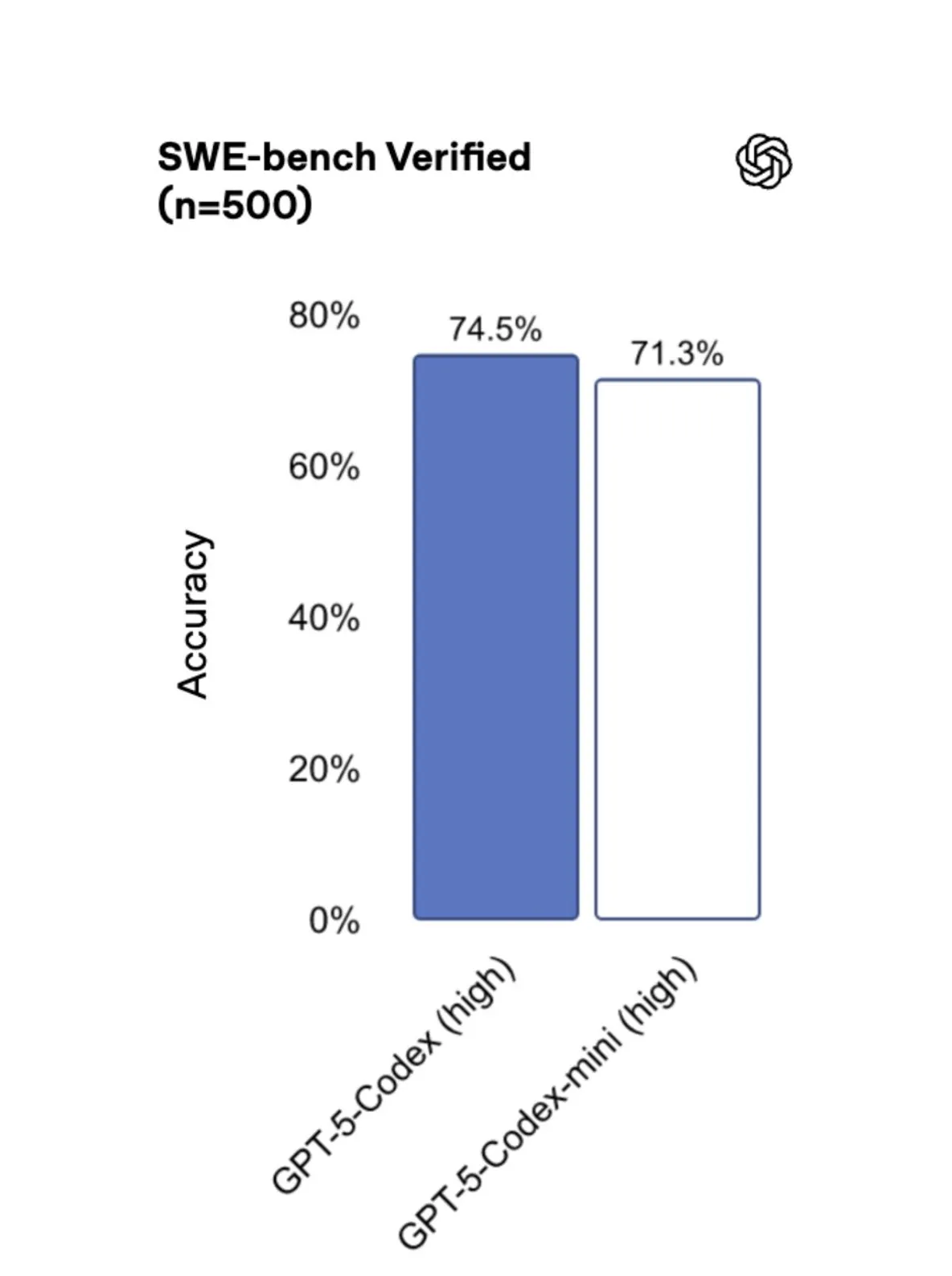

OpenAI has unveiled GPT-5-Codex-Mini, a new, streamlined model designed for developers. This lightweight version of Codex is built for efficiency, handling roughly four times as many requests as the standard GPT-5-Codex with only a minor trade-off in accuracy.

How It Works and Who It's For

Codex-Mini is suited to simpler, routine coding tasks and to users approaching their request limits. To prevent service interruptions, the system automatically prompts you to switch to the Mini model once 90% of your quota is used. The model is now available through the CLI and IDE extensions for ChatGPT account holders, with API support planned for a future release.
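
The 90% prompt can be pictured as a simple threshold check. The Python sketch below is illustrative only: the function names, the model identifier strings, and the idea that the user must accept the prompt before the switch happens are assumptions made for this example, not a description of how OpenAI's tooling is implemented.

```python
# Hypothetical sketch; none of these names come from OpenAI's tooling.
SWITCH_THRESHOLD_PERCENT = 90  # the 90% figure reported in the article


def should_suggest_mini(used: int, quota: int,
                        threshold_percent: int = SWITCH_THRESHOLD_PERCENT) -> bool:
    """Return True once usage reaches the threshold share of the quota."""
    if quota <= 0:
        raise ValueError("quota must be positive")
    # Integer comparison avoids floating-point rounding at the boundary.
    return used * 100 >= quota * threshold_percent


def pick_model(used: int, quota: int, user_accepts_switch: bool) -> str:
    """Pick a model name; the switch only happens if the user accepts the prompt."""
    if should_suggest_mini(used, quota) and user_accepts_switch:
        return "gpt-5-codex-mini"  # assumed identifier for the Mini model
    return "gpt-5-codex"           # assumed identifier for the standard model
```

With a quota of 100 requests, `should_suggest_mini(89, 100)` is false and `should_suggest_mini(90, 100)` is true, so the suggestion would first appear on the 90th request.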

Key Highlights

- Increased Capacity: Processes roughly four times as many requests as the standard GPT-5-Codex.

- Efficient Performance: Optimized for GPU usage, making it a cost-effective choice.

- Enhanced User Tiers: Plus, Business, and Edu users receive a 50% increase in request limits, while Pro and Enterprise accounts get priority processing.
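
To make the ratios in the highlights concrete, here is a small Python calculation. The baseline of 100 requests is a made-up figure chosen for easy arithmetic, since the article gives only ratios and no absolute limits. The two numbers are computed separately because the article does not say whether they combine.

```python
# Hypothetical baseline; the article reports ratios, not absolute limits.
BASE_LIMIT = 100            # illustrative requests per window, not a real figure
MINI_MULTIPLIER = 4         # "roughly four times" as many requests on Mini
TIER_INCREASE_PERCENT = 50  # limit increase for Plus, Business, and Edu


def mini_equivalent(standard_requests: int) -> int:
    """Approximate requests available on Mini for the same share of quota."""
    return standard_requests * MINI_MULTIPLIER


def raised_limit(old_limit: int) -> int:
    """Request limit after the 50% tier increase."""
    return old_limit * (100 + TIER_INCREASE_PERCENT) // 100


print(mini_equivalent(BASE_LIMIT))  # 400
print(raised_limit(BASE_LIMIT))     # 150
```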

OpenAI positions Codex-Mini as the go-to tool for routine coding tasks, reserving the full-featured model for complex scenarios that demand maximum precision and contextual understanding.